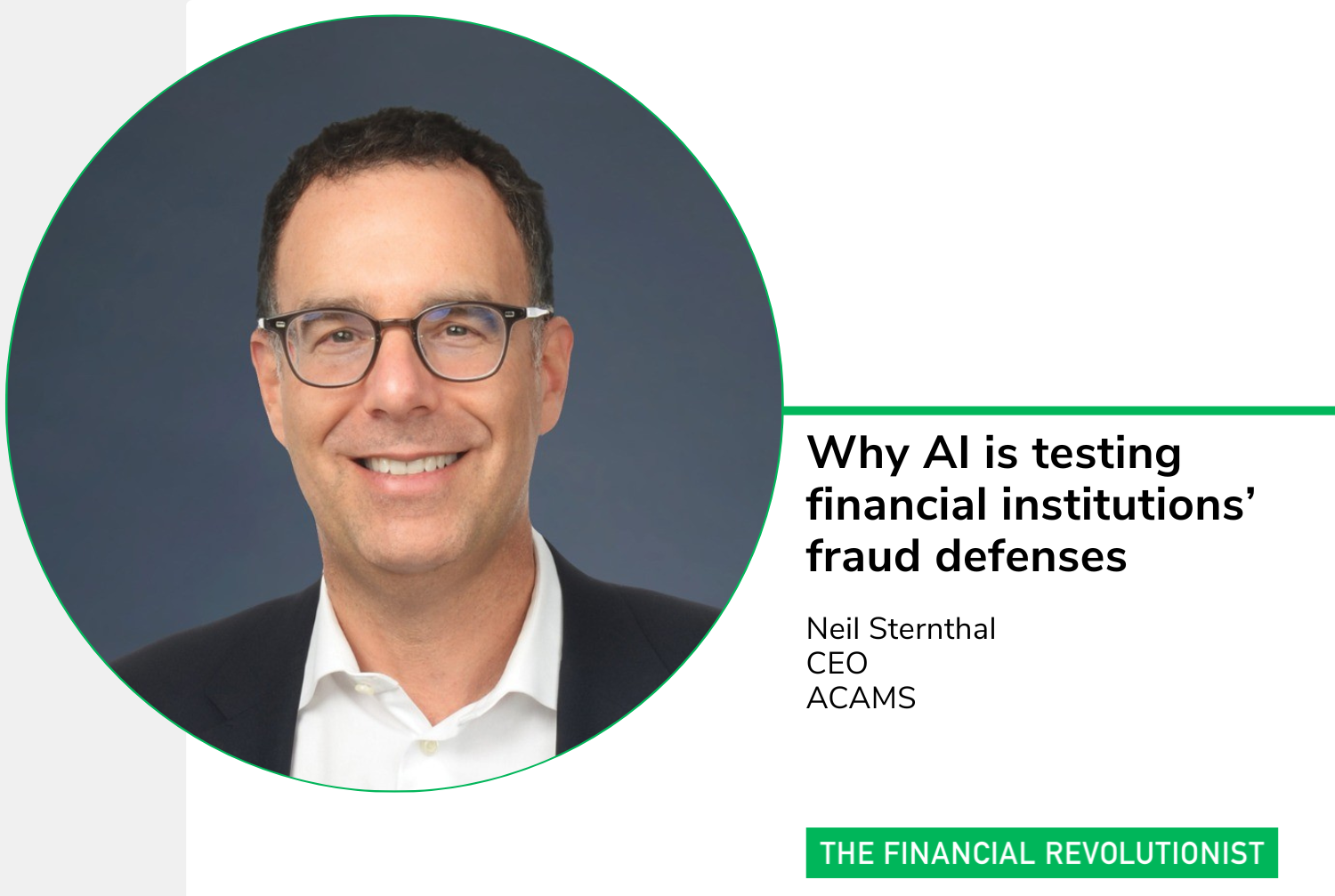

Why AI is testing financial institutions’ fraud defenses

The new era of AI-generated fraud is here, with deepfakes and other AI-enabled techniques scaling rapidly. AI-generated fraud raises the prospect of catastrophic damage to organizations, driven by attacks that require far less planning and technical expertise than in the past.

In the 2026 Global Anti-Financial Crime Threats report from ACAMS, a certification and intelligence provider for the anti-financial crime (AFC) community, 75% of AFC professionals said the malicious use of generative AI poses a high or very high risk to their programs.

Based on responses from nearly 1,400 AFC professionals across more than 200 jurisdictions, the report shows financial institutions’ anti-fraud capabilities coming under new tests from emerging threats. Eighty-four percent of respondents say they are now focused on scams and fraud targeting individuals, while 79% cite sanctions and export-control evasion as a top concern. Internal constraints are significant: just over half of respondents say outdated data and legacy IT systems pose a high or very high risk to their financial crime programs, even as more than half report they are already using AI tools in their compliance operations.

The FR sat down with Neil Sternthal, CEO of ACAMS, to discuss the growing threat of AI-enabled fraud and how the industry is responding.

How has the accelerating pace and sophistication of financial crime changed the risk landscape for both financial institutions and everyday consumers?

AI has changed everything. It’s not just the tools criminals use, but the speed and scale at which they change and operate.

Financial crime today is driven by well-funded, highly coordinated networks that move across borders and channels faster than ever before. Technology makes deception increasingly convincing and harder to detect. That's a dangerous combination, and it will take an entire community to address it.

In our latest anti-financial crime threats report, the malicious use of generative AI came out as the most significant threat facing this community. What that means in practice is more convincing scams, faster fraud, greater damage. For consumers, everyday habits now carry real risk. For organizations, the exposure is financial losses from fraud, regulatory scrutiny, operational disruption, and reputational harm.

The AFC community must respond by being ever more proactive.

How can the industry rebuild public trust in a world where scams, deepfakes and account takeovers are becoming everyday threats?

Scams and deepfakes are no longer outliers. They're part of everyday life. AI makes it easier to manipulate voices, images, videos and behavior at scale. Our defenses need to evolve just as fast.

Rebuilding trust requires strategic intent. Organizations need to move from siloed controls to enterprise-wide financial crime capabilities, real-time intelligence, advanced analytics and cross-channel data.

And resilience isn't just about prevention; it's also about response, clear accountability, disciplined decision-making and scalable remediation. That's what protects organizations as risks evolve.

Consumers also need practical support: simple habits that can interrupt a scam in progress. For example, slow down when you get an urgent message. Verify requests through a second channel. Treat unexpected requests as suspicious by default. Trust in the financial system isn't built on speed or access alone. It's built on the strength, consistency, and credibility of institutional safeguards.

Financial crime is now too large and fast-moving for any one institution to tackle alone. What role does community and information-sharing play in closing that gap?

Bad actors operate as networks, so we need to as well.

When criminals move funds across borders, ownership structures, fiat currencies and digital assets, no single institution sees the full picture. We only catch fragments unless we work together.

Regulators understand this. In the U.S., the USA PATRIOT Act gave financial institutions a safe harbor to share information and collaborate to identify illicit activity. More recently, FinCEN issued guidance encouraging financial institutions to share information across borders while reinforcing SAR confidentiality. In the EU, Article 75 of the AML Regulation is designed to break down information silos. That's progress. But more can be done.

What does effective collaboration between regulators, banks, law enforcement and the private sector actually look like when it comes to stopping financial crime?

Institutions see patterns. Regulators clarify expectations. Private organizations bring advanced technology and analytics. Law enforcement can prioritize and act in ways that weren't possible before.

Just as importantly, collaboration moves us beyond being purely reactive. We're not just stopping individual transactions; we're dismantling entire criminal systems. That's why collaboration matters so much in the AFC community, and why ACAMS prioritizes knowledge-sharing around the world.

One example: our International Anti-Fraud & Technology Task Force, launched in November 2025, brings together government agencies, major international banks, and financial and non-financial operators.

Some may describe industry forums and association events as talking shops. What value do you think they provide to practitioners?

With rapid advances in AI and a constantly changing global regulatory environment, AFC practitioners are challenged not just with staying up to date but with actually staying ahead to prevent crimes before they happen.

But it’s hard to do that by yourself. These forums are designed to bring the community together and share knowledge. The goal is to create a space where practitioners can compare notes, pressure-test scenarios and think through how emerging risks translate into real-world decisions. Bringing together people from different regions, roles and sectors helps surface what’s actually working, where frameworks are being tested, and what may be coming next.